A common notion in AI & cognitive science is that intelligence measures an entity’s general ability to achieve goals in a wide range of environments. This piece proposes a framework for evaluating an entity’s goal-pursuit capability. It examines the interplay between an entity, its environment, and the other entities with which it engages. The piece separates goal pursuit into two components: abstract planning and execution infrastructure.

Abstract Planning

Abstract planning is an entity’s capacity to take a set of inputs, process them, and choose the output that best advances a goal. It is a non-physical process, carried out by the entity’s latent informational architecture. Abstract planning aligns closely with the idea of raw intelligence.

Execution Infrastructure

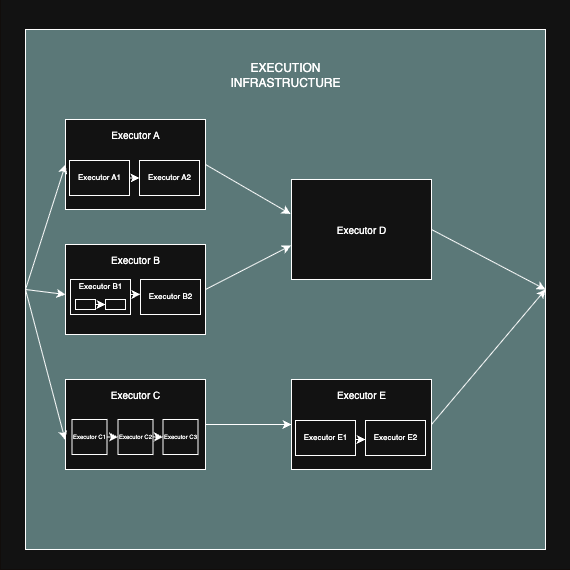

Execution infrastructure is the addressable set of external resources the planner can access, or attempt to recruit, to enact its logic in the world. It includes the architecture of these resources, their connectivity, and the reliability their design affords. This is not a top-down control stack the planner owns; it is an external pool the entity can address, request, and coordinate, with success contingent on availability, incentives, and protocols. In practice, it is composed of chains and nested hierarchies of executors: the tools an entity can leverage.

How These Systems Work Together

Abstract planning in isolation has no capacity to achieve goals; without corresponding execution infrastructure, it remains inert. The abstract planning system outputs signals that attempt to activate the execution infrastructure, and this activation can set off a chain of entities executing tasks. For example, an LLM might invoke a tool by emitting a token; that tool may then call other tools, forming a cascade. Activation often invokes nested hierarchies of entities executing tasks. In hierarchical cases, a tool may itself be a program that orchestrates several APIs or functions: sub-processes nested within a larger process.
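A minimal Python sketch of such a cascade follows. It assumes a toy model in which each executor either performs a leaf action or delegates to sub-executors in sequence, and a call succeeds only if every nested call succeeds. The `Executor` class and the tool names are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Executor:
    """Hypothetical node in an execution infrastructure (illustration only).

    A leaf executor runs `action`; a composite executor calls its
    `children` in order, forming a nested hierarchy of sub-processes.
    """
    name: str
    action: Optional[Callable[[], bool]] = None
    children: List["Executor"] = field(default_factory=list)

    def run(self, trace: List[str], depth: int = 0) -> bool:
        # Record each activation, indented by its depth in the hierarchy.
        trace.append("  " * depth + self.name)
        if self.action is not None:
            return self.action()
        # A composite call succeeds only if every sub-call succeeds; all()
        # short-circuits, so the cascade halts at the first failure.
        return all(child.run(trace, depth + 1) for child in self.children)


# Hypothetical tools: the planner's signal activates one top-level tool,
# which in turn calls two others.
report_tool = Executor("report_tool", children=[
    Executor("search_api", action=lambda: True),
    Executor("summarise_fn", action=lambda: True),
])

trace: List[str] = []
print(report_tool.run(trace))  # True
print("\n".join(trace))
```

Running it prints `True` followed by the indented activation trace. Swapping either leaf's action for `lambda: False` makes the top-level call fail, which is the cascading dependency described above.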

All abstract planning is ultimately grounded in a physical substrate: the abstract planning architecture emerges from the physical system that represents it. A human brain, for example, functions as a product of its biological components, and its intelligent capabilities are a latent product of that biological makeup. Here the line between planning and execution blurs, because the abstract planning system and its first physical execution layer have perfect fidelity: alterations in the execution layer correspond directly to equivalent changes in the abstract planning system. We may view this first layer of execution as the communication interface the planner uses to address and recruit resources.

Formalising the Relationship

This intentionally simple formalisation provides context rather than rigour:

$$P_k = A_k \cdot E_k$$

Where $P_k$ = probability of completing task k

$A_k$ = capability of the abstract planner for task k

$E_k$ = probability that the execution for task k succeeds
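Read literally, the formalisation $P_k = A_k \cdot E_k$ is a single multiplication. This sketch assumes both factors lie in [0, 1], which the piece does not state explicitly. `task_success_probability` is a hypothetical helper name.

```python
def task_success_probability(planner_capability: float, execution_success: float) -> float:
    """P_k = A_k * E_k (hypothetical helper; both factors assumed in [0, 1])."""
    for value in (planner_capability, execution_success):
        if not 0.0 <= value <= 1.0:
            raise ValueError("this sketch assumes both factors lie in [0, 1]")
    return planner_capability * execution_success


print(task_success_probability(0.9, 0.5))  # 0.45
print(task_success_probability(0.5, 0.9))  # 0.45
```

The two calls give the same result. Under this model, a strong planner cannot outrun weak execution, and strong execution cannot rescue weak planning.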

Multiscale Competency Architecture & Error Correction

If we extend the idea of nested entities to its fullest extent, we eventually get to small mechanical components, down to components whose behaviour is governed by the fundamental laws of physics. Trying to understand a system holistically becomes overly complex when we consider its components at such a granular level. It is instead more useful to consider entities that are higher up the hierarchy (at a higher level of abstraction) as holistic components and to attempt to generalise the rules that govern their behaviour. For higher-level entities, we ask: given conditions S, what is the probability the system completes task k? This framing is not only useful for observability; it illustrates how the planning system represents the executor. The abstract planning system cannot model all of the underlying complexity of the executor, so instead it must simplify its understanding of the executor into a more informationally dense form. In fact, much of the strength of the abstract planning system is in its capacity to create effective models of other entities.
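Here is a sketch of what such a compressed model might look like. It assumes the planner keeps estimated success rates for an executor, keyed by coarse-grained conditions S and learned from observed outcomes rather than from the executor's internals. `ExecutorModel`, the condition labels, and the add-one (Laplace) smoothing are illustrative choices, not part of the framework itself.

```python
from collections import defaultdict
from typing import DefaultDict, Hashable


class ExecutorModel:
    """Hypothetical compressed model of an executor: P(task k succeeds | S)."""

    def __init__(self) -> None:
        self.successes: DefaultDict[Hashable, int] = defaultdict(int)
        self.trials: DefaultDict[Hashable, int] = defaultdict(int)

    def record(self, conditions: Hashable, succeeded: bool) -> None:
        self.trials[conditions] += 1
        self.successes[conditions] += int(succeeded)

    def estimate(self, conditions: Hashable) -> float:
        # Add-one (Laplace) smoothing: unseen conditions give 0.5, not 0/0.
        s = self.successes.get(conditions, 0)
        n = self.trials.get(conditions, 0)
        return (s + 1) / (n + 2)


# Hypothetical condition labels for, say, a delivery executor.
model = ExecutorModel()
for outcome in (True, True, True, False):
    model.record(("daylight", "dry"), outcome)
model.record(("night", "wet"), False)

print(model.estimate(("daylight", "dry")))  # 4/6, about 0.667
print(model.estimate(("night", "wet")))     # 1/3, about 0.333
print(model.estimate(("unseen",)))          # 0.5
```

The model stores two counts per condition regardless of how complex the executor is. That is the kind of informationally dense summary the paragraph describes.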

Michael Levin describes a similar structure: evolution exploits a multiscale competency architecture (MCA), in which the subunits making up each level of organisation are themselves homeostatic agents. A useful idea to borrow from MCA is that when a hierarchy of sub-components contains layers of sufficiently complex abstract planning systems, the system can be built around the design principle of error correction. More sophisticated abstract planners reduce error by handling a broader set of conditions S for a given task k.

Chained execution magnifies this need. If a goal requires n sequential steps, where step i succeeds with probability $p_i < 1$, overall success decays multiplicatively (treating steps as independent):

$$P_{\text{goal}} = \prod_{i=1}^{n} p_i$$

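If we introduce a uniform per-step reliability gain $\delta$:

$$P'_{\text{goal}} = \prod_{i=1}^{n} \left(p_i + \delta\right)$$

Where $p_i$ is the success probability of step i and $0 < \delta \le 1 - \max_i p_i$, so that no step exceeds certainty.

Error correction raises reliability at each step, limiting the exponential-like decay across the chain.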

Each $p_i$ can itself be expanded at a finer scale as a product over its sub-steps; here we keep $p_i$ coarse-grained for clarity.
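The following is a quick numerical check of the chained-execution argument. It assumes independent steps and models the uniform per-step reliability gain as an amount `delta` added to each step's success probability, capped at 1. Both the additive form and the numbers are illustrative assumptions.

```python
import math
from typing import Sequence


def chain_success(step_probs: Sequence[float], delta: float = 0.0) -> float:
    """Probability that every step of a sequential chain succeeds.

    Assumes independent steps. `delta` is an assumed uniform, additive
    per-step reliability gain from error correction, capped at 1 per step.
    """
    return math.prod(min(1.0, p + delta) for p in step_probs)


steps = [0.95] * 20  # illustrative: 20 steps, each 95% reliable
print(round(chain_success(steps), 3))              # 0.358
print(round(chain_success(steps, delta=0.04), 3))  # 0.818
```

Twenty steps at 95% reliability succeed together only about 36% of the time, while lifting each step to 99% restores roughly 82%. A step's probability can itself be computed with `chain_success` over its sub-steps, so the same function also expresses the finer-scale expansion.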

Consider further that an entity’s success may not be binary. Many entities generate a range of outputs, described by the set O. Variations in O passed to the next entity in the chain lead to variations in the set of downstream conditions S’, and a system with more sophisticated abstract planning will be better able to handle variations in S’.
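To make the non-binary case concrete, assume the upstream entity's outputs O fall into a few discrete categories with known probabilities. Assume also that each downstream planner has some probability of succeeding given each category (that is, given the resulting conditions S’). The categories, probabilities, and planner profiles below are hypothetical.

```python
from typing import Dict


def downstream_success(output_dist: Dict[str, float],
                       success_given_output: Dict[str, float]) -> float:
    """Expected downstream success: sum over o in O of P(o) * P(success | o)."""
    return sum(p * success_given_output.get(o, 0.0) for o, p in output_dist.items())


# Hypothetical distribution over the upstream entity's outputs O.
outputs = {"clean": 0.7, "noisy": 0.2, "malformed": 0.1}

# Hypothetical downstream planners; the sophisticated one copes with more of S'.
simple_planner = {"clean": 0.95, "noisy": 0.3}
sophisticated_planner = {"clean": 0.95, "noisy": 0.85, "malformed": 0.4}

print(round(downstream_success(outputs, simple_planner), 3))         # 0.725
print(round(downstream_success(outputs, sophisticated_planner), 3))  # 0.875
```

Both planners perform identically on clean outputs. The gap comes entirely from how well each copes with the noisy and malformed variations.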

Calling Executors – Suitability

Task success also depends on suitability between entity and task. One form is training suitability; some entities have been trained to complete certain types of tasks. This could be anything from an OCR system designed for handwritten digit recognition to an experienced bookkeeper’s proficiency at creating a balance sheet.

Another form is structural suitability: whether the task is planner-leaning or executor-leaning. In sprinting, for example, even a highly proficient abstract planning system can do only so much to overcome differences in the execution infrastructure, such as power-to-weight ratio and fast-twitch fibre composition. Sprinting is a task more contingent on an entity’s execution infrastructure. Conversely, imagine a chess computer that must decide the best next move for a given position. The abstract planning that goes into choosing the move is highly complex, yet executing that move in a digital environment is simple. Chess computation is a task more contingent on an entity’s abstract planning. (In both examples, “simple” means simple at the chosen interface; the substrate’s complexity is coarse-grained into a reliability term, and our labels reflect the dominant bottleneck at this cut, not intrinsic simplicity.)
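Take the multiplicative formalisation $P_k = A_k \cdot E_k$, treating both factors as values in [0, 1] (an assumption). Raising $A_k$ to 1 adds $(1 - A_k)E_k$ to $P_k$, while raising $E_k$ to 1 adds $(1 - E_k)A_k$. The first gain is larger exactly when $E_k > A_k$, so the dominant bottleneck is simply the smaller factor. The sketch below encodes that rule. `dominant_bottleneck` is a hypothetical helper, and the sprinting and chess values are made up only to mirror the examples.

```python
def dominant_bottleneck(planner: float, execution: float) -> str:
    """Which factor of P_k = A_k * E_k limits success more (hypothetical helper).

    Gain from perfecting planning:  (1 - A_k) * E_k
    Gain from perfecting execution: (1 - E_k) * A_k
    The first exceeds the second exactly when E_k > A_k.
    """
    gain_planning = (1 - planner) * execution
    gain_execution = (1 - execution) * planner
    return "planning" if gain_planning > gain_execution else "execution"


# Hypothetical values, scored at the chosen interface.
print(dominant_bottleneck(planner=0.9, execution=0.3))   # execution (sprinting)
print(dominant_bottleneck(planner=0.4, execution=0.99))  # planning (chess engine)
```

Ties are reported as `"execution"`, an arbitrary choice in this sketch.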

Calling Executors – Connectivity

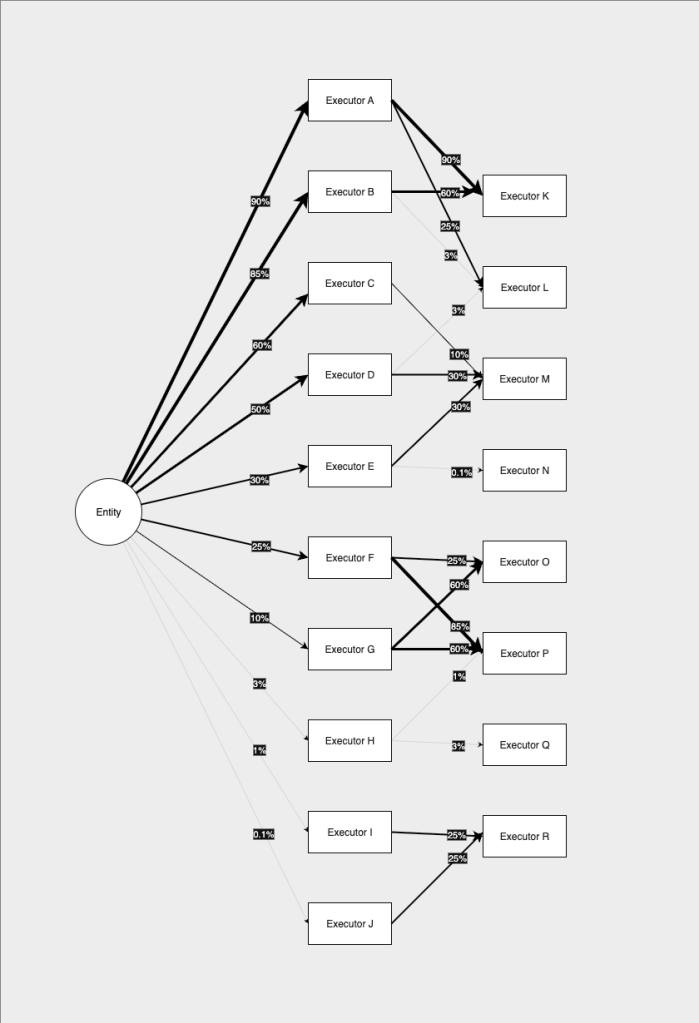

Execution infrastructure is a connectivity/network problem. Its capability depends on which entities can be called and how reliably, and on how easily resources can invoke other resources.
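Treating the execution infrastructure as a directed graph makes the connectivity framing concrete. The sketch assumes each edge carries an independent probability that one entity can successfully invoke the next. It finds the most reliable chain from the planner to a target resource by running Dijkstra's algorithm on −log(probability), which turns a max-product search into a shortest-path search. The entity names and probabilities are hypothetical.

```python
import heapq
import math
from typing import Dict, List, Optional, Tuple

# Hypothetical network: caller -> {callee: P(call succeeds)}
Graph = Dict[str, Dict[str, float]]


def most_reliable_chain(graph: Graph, source: str,
                        target: str) -> Tuple[float, Optional[List[str]]]:
    """Max-product path via Dijkstra on edge weights -log(p)."""
    best = {source: 0.0}            # lowest accumulated -log(p) found so far
    previous: Dict[str, str] = {}
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == target:
            path = [node]
            while path[-1] != source:
                path.append(previous[path[-1]])
            return math.exp(-cost), path[::-1]
        if cost > best.get(node, math.inf):
            continue                # stale queue entry
        for callee, p in graph.get(node, {}).items():
            if p <= 0:
                continue            # unusable edge: -log(0) is undefined
            new_cost = cost - math.log(p)
            if new_cost < best.get(callee, math.inf):
                best[callee] = new_cost
                previous[callee] = node
                heapq.heappush(queue, (new_cost, callee))
    return 0.0, None                # target unreachable


network: Graph = {
    "planner": {"api_gateway": 0.99, "human_contractor": 0.6},
    "api_gateway": {"robot_arm": 0.7},
    "human_contractor": {"robot_arm": 0.95},
}
reliability, chain = most_reliable_chain(network, "planner", "robot_arm")
print(chain, round(reliability, 3))
```

Independence is a strong assumption. Where failures are correlated, the product of edge probabilities will not match the true reliability of the chain.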

Much of the difference in capabilities between humans and LLMs can be attributed to the resources each can leverage and how easily they can leverage them. Humans have a clear advantage in access to resources in the physical domain. Even outside it, humans can call upon a wider range of resources with far less friction than an LLM currently faces. Enormous investment is flowing into LLM execution infrastructure, especially agents and tool-using environments.

The strength of an entity’s execution network can be represented explicitly by the set of resources available to it and the reliability with which it can influence those resources. In theory, an entity may influence far more entities than intuition suggests, even if that influence is minuscule or indirect. If $E_k$ depends on conditions S, then any other entity that perturbs S, even slightly, affects $E_k$. As the capacity to influence the necessary entities becomes vanishingly small, the shortfall can only be offset by proportionally equivalent increases in abstract planning capability.

Given that $P_k = A_k \cdot E_k$, if $E_k$ decreases, $A_k$ must increase proportionally to maintain $P_k$.
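Rearranging $P_k = A_k \cdot E_k$ gives the planning capability needed to hold $P_k$ fixed: $A_k = P_k / E_k$. As an illustration, the sketch below assumes $A_k$ is capped at 1 like a probability. Under that assumption, compensation becomes impossible once $E_k$ falls below the target $P_k$. If planning capability is treated as unbounded, the cap disappears. `required_planning` is a hypothetical helper.

```python
from typing import Optional


def required_planning(target: float, execution: float,
                      cap: Optional[float] = 1.0) -> Optional[float]:
    """A_k needed so that A_k * E_k equals `target` (hypothetical helper).

    Assumes A_k is capped at `cap` (1.0 by default, as if it were a
    probability); pass cap=None to treat planning capability as unbounded.
    Returns None when the target is unreachable.
    """
    if execution <= 0:
        return None  # no finite A_k compensates for zero execution success
    needed = target / execution
    if cap is not None and needed > cap:
        return None
    return needed


for e_k in (0.9, 0.6, 0.3):
    print(e_k, required_planning(target=0.5, execution=e_k))
```

Halving $E_k$ doubles the $A_k$ required. Under the cap, a target of 0.5 becomes unreachable once $E_k$ drops below 0.5.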

There is some speculation as to whether LLMs alone will provide the groundwork for AGI and/or artificial superintelligence. We might ask: could an entity with the execution infrastructure of a current LLM build a Dyson sphere? Given the limitations of its execution infrastructure, the LLM would need extraordinary abstract planning capabilities. These would include proficiency in every task involved in invoking entities with the physical capabilities to build such a structure on its behalf. It would need to compensate for its limited access to resources with an inordinate amount of intelligence. If abstract planning capabilities are unbounded, this could theoretically be possible, but there may also be information-theoretic upper bounds on the capabilities of an LLM. It seems more plausible that superintelligent AI will become tractable through improvements in the execution infrastructure available to LLMs than through improvements in their abstract planning capabilities alone.

Connectivity aligns with reinforcement learning: tools are part of the environment an agent must learn to use under uncertainty. While abstract planning shapes which action sequences to attempt, the agent’s capabilities also hinge on the utility available from resource recruitment, that is, from the execution infrastructure. In multi-agent reinforcement learning, other agents may be included in the set of available resources, so modelling their behaviour becomes critical.
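The RL framing can be illustrated with its simplest case, a multi-armed bandit. Each arm is a tool with an unknown success probability, and the agent learns under uncertainty which tool to recruit. This is an epsilon-greedy sketch; the tool names, success rates, and `epsilon` value are hypothetical. Real tool-using agents face far richer state than a bandit captures.

```python
import random
from typing import Dict


def learn_tool_values(true_success: Dict[str, float], steps: int = 5000,
                      epsilon: float = 0.1, seed: int = 0) -> Dict[str, float]:
    """Epsilon-greedy estimates of each (hypothetical) tool's success rate."""
    rng = random.Random(seed)
    tools = list(true_success)
    counts = {t: 0 for t in tools}
    values = {t: 0.0 for t in tools}          # running mean reward per tool
    for _ in range(steps):
        if rng.random() < epsilon:
            tool = rng.choice(tools)           # explore a random tool
        else:
            tool = max(tools, key=values.get)  # exploit the current best estimate
        reward = 1.0 if rng.random() < true_success[tool] else 0.0
        counts[tool] += 1
        values[tool] += (reward - values[tool]) / counts[tool]  # incremental mean
    return values


# Hypothetical tools and success rates, unknown to the agent.
estimates = learn_tool_values({"web_search": 0.55, "calculator": 0.9, "ocr": 0.3})
print(max(estimates, key=estimates.get))
```

With enough exploration, the agent's estimates approach the true rates and it settles on the most reliable tool. This is a small-scale version of learning which resources are worth recruiting.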

Humans, AI & Hybrid Systems

Humanity itself functions as a higher-order entity with its own implicit goals. The goals of collective humanity are intractably difficult for individual humans to fully understand, and the topic is the subject of much debate and conjecture. As we build increasingly capable AI systems, we augment humanity as an entity, and many of humanity’s systems are becoming hybrid systems with AI. In fact, humanity already acts in conjunction with many other non-human entities (biological, mechanical, digital, and more), and each of these augmentations has significantly changed the reality humanity faces. AI will likewise radically shift humanity’s reality.

The addition of AI will make humanity far more proficient as a collective goal-pursuing entity. It is critical that we deeply consider what our entity is optimising for and whether it is aligned with what we value as individuals. Should our system be optimising for goals that misalign with human interests, it may exacerbate many issues we face as it grows more efficient.

Limitations: Considering Inputs & Temporal Influences

This piece largely abstracts away the input channel. A fuller treatment would examine how entities ingest signals: which modalities they can translate, how modalities interact, and how temporal context is represented. Limits on input breadth constrain the space of feasible tasks. When comparing humans and LLMs, a major difference is the breadth and modality of context they can ingest. LLMs are generally more limited, though in some respects (e.g., context-window size under certain memory regimes) they can exceed humans. The comparison depends on what counts as memory infrastructure.

Finally, intelligence often operates in loops rather than single calls to infrastructure: plans update with new inputs, actions reshape the conditions, and entities progressively update memory to better equip themselves.